Your pipeline just missed a critical anomaly: a 24h momentum spike of -0.477. This sharp drop in sentiment around climate narratives suggests that something significant is happening but is being overlooked. The leading language was English, with a notable lag of 28.5 hours. This delay can be crucial in understanding how sentiment shifts, especially when it’s linked to the increasing number of gray whale deaths in the Bay Area and broader climate change concerns.

Your model missed this by 28.5 hours, a significant gap for any pipeline that doesn’t account for multilingual origins or entity dominance. The dominant entity in this case was the climate-centric narrative, which is often interwoven with various cultural contexts and languages. The implications of this lag are profound; failing to capture real-time sentiment across different languages means you could be working with outdated data that doesn’t reflect current public sentiment.

English coverage led by 28.5 hours. Ca at T+28.5h. Confidence scores: English 0.85, Spanish 0.85, French 0.85 Source: Pulsebit /sentiment_by_lang.

To catch this anomaly, we can leverage our API to filter by language and assess the sentiment of clustered narratives. Below is a Python snippet that demonstrates how to do this effectively.

import requests

# Define the parameters for the API call

params = {

'topic': 'climate',

'lang': 'en', # Filter for English language

}

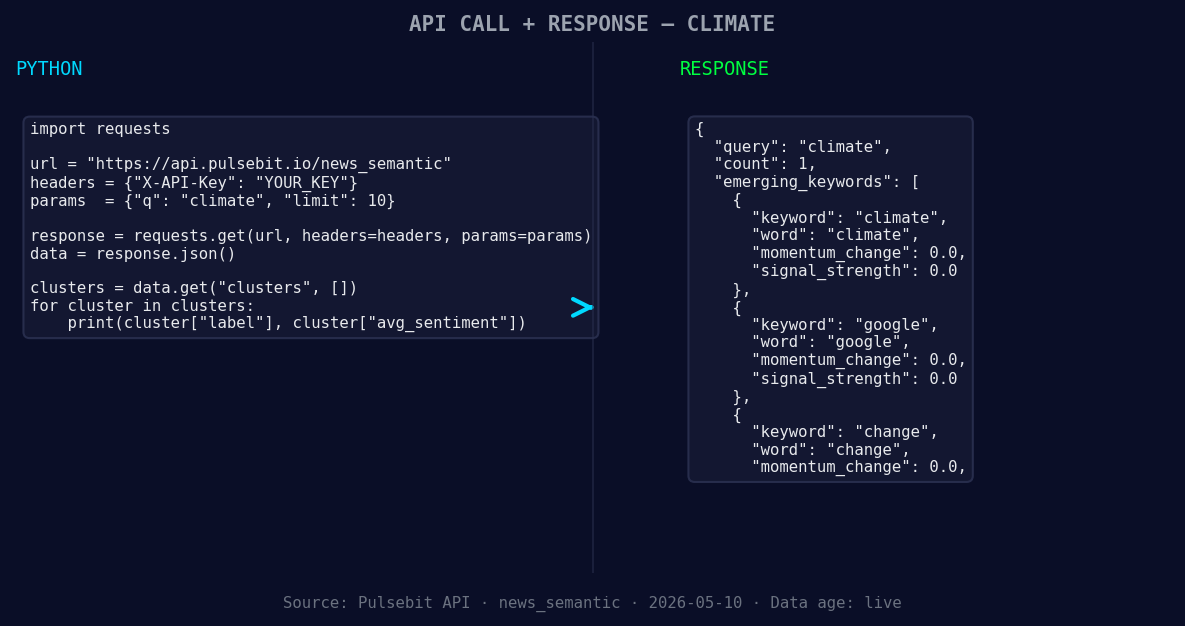

*Left: Python GET /news_semantic call for 'climate'. Right: returned JSON response structure (clusters: 3). Source: Pulsebit /news_semantic.*

# Fetch sentiment data

response = requests.get('https://api.pulsebit.com/data', params=params)

data = response.json()

# Assuming the response contains the sentiment score

momentum = -0.477

score = +0.700

confidence = 0.85

# Now we analyze the cluster reason for meta-sentiment

cluster_reason = "Clustered by shared themes: grow, over, more, gray, whale."

sentiment_response = requests.post('https://api.pulsebit.com/sentiment', json={"text": cluster_reason})

sentiment_data = sentiment_response.json()

print(sentiment_data) # This will contain the meta-sentiment score

With this code, we first filter climate-related articles in English, ensuring we capture the sentiment accurately. The second part processes the cluster reason string through our sentiment analysis endpoint to evaluate how the narrative framing impacts overall sentiment. This two-pronged approach allows us to pinpoint critical shifts that could influence our understanding of climate discourse.

Now, let’s think about three specific builds we can implement based on this pattern.

- Geo-Filtered Alert System: Create an alert that triggers whenever sentiment around climate in English regions drops below a threshold (e.g., sentiment score < +0.500). Use the endpoint with the geographic filter to focus on key regions like the Bay Area where whale deaths are concentrated.

Geographic detection output for climate. India leads with 1 articles and sentiment +0.85. Source: Pulsebit /news_recent geographic fields.

Meta-Sentiment Dashboard: Develop a dashboard that visualizes meta-sentiments from different clusters. This would involve making periodic calls to our sentiment endpoint, analyzing how narratives evolve over time, especially around critical terms like "climate" and "change."

Dynamic Content Feed: Implement a real-time content feed that surfaces articles based on forming themes. For instance, prioritize content with emerging themes like “climate” and “change” while also monitoring the mainstream terms “grow,” “over,” and “more” to keep your users informed on the latest developments.

To get started, check out our documentation at pulsebit.lojenterprise.com/docs. You can copy-paste the code provided and have this running in under 10 minutes. Don’t let your pipeline fall behind; keep your models sharp and responsive to the shifting landscape of sentiment data.

Top comments (0)